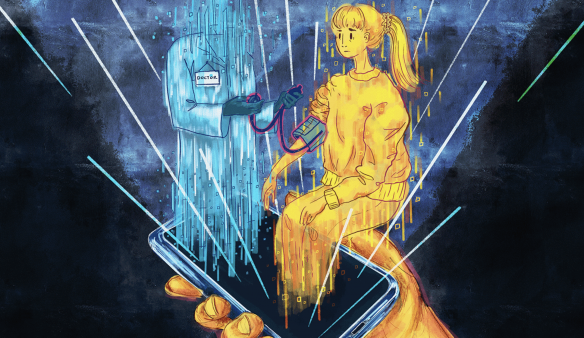

The use of smartphones as diagnostic tools is a work in progress, experts say.

By Hannah Norman and Kaiser Health News

The same devices used to take selfies and write tweets are being repurposed and marketed to quickly access the information needed to monitor a patient's health. A fingertip pressed against a phone's camera lens can measure heart rate. A microphone, kept by the bedside, can detect sleep apnea. Even the speaker is being used to monitor breathing using sonar technology.

In the best version of this new world, data is transmitted remotely to a medical professional for the patient's convenience and comfort or, in some cases, to assist a doctor without the need for expensive hardware.

But the use of smartphones as diagnostic tools is a work in progress, experts say. While doctors and their patients have had some real-world success implementing the phone as a medical device, the overall potential remains unrealized and uncertain.

Smartphones come with sensors capable of monitoring a patient's vital signs. They can help assess people for concussions, monitor for atrial fibrillation, and conduct mental health wellness checks, to name just a few emerging applications.

Companies and researchers eager to find medical applications for smartphone technology are leveraging the cameras and light sensors built into modern phones; microphones; accelerometers, which detect body movements; gyroscopes; and even speakers. The apps then use artificial intelligence software to analyze the collected images and sounds, creating a seamless connection between patients and doctors. The profit potential and marketability are evident in the more than 350,000 digital health products available in app stores, according to a report by Grand View Research.

“It’s very difficult to place devices in a patient’s home or in the hospital, but everyone carries a cell phone with a network connection,” said Dr. Andrew Gostine, CEO of the sensor networking company Artisight. Most Americans own a smartphone, including more than 60% of people over 65, up from just 13% a decade ago, according to the Pew Research Center. The COVID-19 pandemic has also pushed people to become more comfortable with virtual care.

Some of these products have sought FDA approval to be marketed as medical devices. That way, if patients have to pay to use the software, health insurers are more likely to cover at least part of the cost. Other products are designated as exempt from this regulatory process, placed in the same clinical classification as a bandage. But the way the agency handles AI and machine learning-based medical devices is still being adjusted to reflect the adaptive nature of the software.

Ensuring accuracy and clinical validation is crucial to securing acceptance by healthcare providers. And many tools still need fine-tuning, said Dr. Eugene Yang, a professor of medicine at the University of Washington. Yang is currently testing contactless measurement of blood pressure, heart rate, and oxygen saturation obtained remotely through Zoom camera images of a patient's face.

Judging these new technologies is difficult because they rely on algorithms created by machine learning and artificial intelligence to collect data, rather than the physical tools typically used in hospitals. Therefore, researchers cannot "compare apples to apples" using medical industry standards, Yang said. The lack of such safeguards undermines the technology's ultimate goals of reducing costs and improving access, because a physician still needs to verify the results.

“False positives and false negatives lead to more testing and higher costs for the health care system,” he said.
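A short worked example shows why even a fairly accurate tool can produce many false alarms when the condition it looks for is uncommon. The sketch below is illustrative only: the function name `screening_outcomes` and every number in it are assumptions chosen for the arithmetic, not figures from Yang or from any product mentioned here.

```python
def screening_outcomes(population, prevalence, sensitivity, specificity):
    """Return expected counts for a screening tool applied to a population.

    All inputs are hypothetical; none come from a real product or study.
    """
    sick = population * prevalence
    healthy = population - sick
    true_positives = sick * sensitivity
    false_negatives = sick - true_positives
    false_positives = healthy * (1.0 - specificity)
    flagged = true_positives + false_positives
    return {
        "true_positives": true_positives,
        "false_negatives": false_negatives,
        "false_positives": false_positives,
        # Share of flagged people who actually have the condition.
        "positive_predictive_value": true_positives / flagged,
    }


if __name__ == "__main__":
    # Illustrative assumption: 10,000 users, 2% have the condition, and the
    # app is right 90% of the time for both sick and healthy users.
    result = screening_outcomes(10_000, 0.02, 0.90, 0.90)
    for name, value in result.items():
        print(f"{name}: {value:.2f}")
```

With these made-up inputs, roughly 980 healthy users are flagged alongside about 180 true cases, so fewer than one in six alerts is correct, and each of those alerts could prompt a follow-up test.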

Large technology companies like Google have invested heavily in researching this type of technology, catering to doctors and home caregivers, as well as consumers. Currently, in the Google Fit app, users can check their heart rate by placing their finger on the rear camera lens or track their breathing rate with the front camera.

“If you take the sensor out of a phone and take it out of a clinical device, they’re probably the same thing,” said Shwetak Patel, director of health technologies at Google and a professor of electrical and computer engineering at the University of Washington.

Google's research uses machine learning and computer vision, a field within AI that relies on information from visual inputs such as videos or images. So, instead of using a blood pressure cuff, for example, the algorithm can interpret subtle visual changes in the body that serve as proxies and biosignals for a patient's blood pressure, Patel said.

Google is also investigating the effectiveness of the built-in microphone in detecting heartbeats and murmurs and using the camera to preserve eyesight by detecting diabetic eye disease, according to information the company published last year.

The tech giant recently acquired Sound Life Sciences, a Seattle startup with an FDA-approved sonar technology app. The app uses a smart device's speaker to bounce inaudible pulses off a patient's body to identify movement and monitor breathing.
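The general idea behind sonar-style sensing can be sketched in a few lines: the delay of an echo gives the distance to the chest, and the slow rise and fall of that distance gives a breathing rate. This is a simplified illustration on simulated data, not a description of Sound Life Sciences' software; the function names, the 0.5-meter distance, and the sampling rate are all assumptions.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate value for air at room temperature


def echo_distance_m(round_trip_s):
    """Distance to a reflecting surface from a pulse's round-trip time."""
    return SPEED_OF_SOUND_M_S * round_trip_s / 2.0


def breaths_per_minute(distances_m, sample_rate_hz):
    """Estimate breathing rate by counting upward crossings of the mean."""
    mean = sum(distances_m) / len(distances_m)
    centered = [d - mean for d in distances_m]
    crossings = sum(1 for a, b in zip(centered, centered[1:]) if a < 0 <= b)
    duration_min = len(distances_m) / sample_rate_hz / 60.0
    return crossings / duration_min


def simulate_round_trips(rate_bpm=15.0, sample_rate_hz=10.0, seconds=60):
    """Simulated echo delays for a chest about 0.5 m away (hypothetical)."""
    delays = []
    for i in range(int(seconds * sample_rate_hz)):
        t = i / sample_rate_hz
        # The chest moves about 5 mm toward and away from the phone.
        chest_m = 0.5 + 0.005 * math.sin(2 * math.pi * rate_bpm / 60.0 * t + 1.0)
        delays.append(2.0 * chest_m / SPEED_OF_SOUND_M_S)
    return delays


if __name__ == "__main__":
    distances = [echo_distance_m(d) for d in simulate_round_trips()]
    print(f"estimated breaths per minute: {breaths_per_minute(distances, 10.0):.1f}")
```

A real system would also have to separate the chest's echo from reflections off walls and bedding, which this sketch ignores.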

Binah.ai, based in Israel, is another company using a smartphone camera to calculate vital signs. Its software observes the region around the eyes, where the skin is slightly thinner, and analyzes the light reflected off blood vessels and back to the lens. The company is finalizing a clinical trial in the U.S. and marketing its wellness app directly to insurers and other healthcare providers, said company spokeswoman Mona Popilian-Yona.
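Camera-based pulse measurement generally rests on one observation: the amount of light reflected from skin varies slightly with each heartbeat. A minimal sketch of that principle, assuming the average brightness of a skin region has already been extracted from each video frame, looks for the strongest rhythm within a plausible heart-rate range. It is not Binah.ai's or Google's algorithm; `estimate_heart_rate_bpm` and the synthetic signal are hypothetical.

```python
import math


def estimate_heart_rate_bpm(brightness, fps, min_bpm=40, max_bpm=180):
    """Return the candidate rate (in whole bpm) with the strongest rhythm.

    brightness: average skin-region brightness, one value per video frame.
    fps: frames per second of the recording.
    """
    n = len(brightness)
    mean = sum(brightness) / n
    centered = [b - mean for b in brightness]  # remove the constant baseline

    best_bpm, best_power = None, -1.0
    for bpm in range(min_bpm, max_bpm + 1):
        freq_hz = bpm / 60.0
        # Correlate the signal with a cosine and a sine at this frequency.
        re = sum(x * math.cos(2 * math.pi * freq_hz * i / fps)
                 for i, x in enumerate(centered))
        im = sum(x * math.sin(2 * math.pi * freq_hz * i / fps)
                 for i, x in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_bpm, best_power = bpm, power
    return best_bpm


if __name__ == "__main__":
    # Synthetic 10-second clip at 30 frames per second with a faint
    # 72-beats-per-minute ripple on top of a constant brightness level.
    fps = 30
    frames = [100.0 + 0.5 * math.sin(2 * math.pi * 1.2 * i / fps)
              for i in range(10 * fps)]
    print(estimate_heart_rate_bpm(frames, fps))
```

Real footage adds motion, lighting changes, and differences in skin tone, which is part of why these tools still need clinical validation.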

The applications even extend to disciplines such as optometry and mental health:

- Canary Speech uses the same underlying technology as Amazon's Alexa to analyze patients' voices, captured through a microphone, for signs of mental health conditions. The software can be integrated with telemedicine appointments and allows doctors to assess anxiety and depression using a library of vocal biomarkers and predictive analytics, said Henry O'Connell, the company's CEO.

- ResApp Health, based in Australia, received FDA approval last year for its iPhone app that detects moderate to severe obstructive sleep apnea by listening to breathing and snoring. The app, SleepCheckRx, requires a prescription and is minimally invasive compared with the sleep studies currently used to diagnose sleep apnea. Those studies can cost thousands of dollars and require a variety of tests.

- Brightlamp's Reflex app is a clinical decision support tool used to manage concussions and vision rehabilitation, among other things. Using an iPad or iPhone camera, the mobile app measures how a person's pupils react to changes in light. Machine learning analysis of the images gives clinicians data points for evaluating patients. Brightlamp sells directly to healthcare providers, and the app is used in more than 230 clinics. Physicians pay a standard annual fee of $400 per account, which is not currently covered by insurance. The Department of Defense has an ongoing clinical trial using Reflex.

In some cases, such as with the Reflex app, the data is processed directly on the phone, rather than in the cloud, said Brightlamp CEO Kurtis Sluss. By processing everything on the device, the app avoids privacy issues, since transmitting data elsewhere requires patient consent.

But algorithms need to be trained and tested by collecting large amounts of data, and that is an ongoing process.

Researchers, for example, have discovered that some computer vision applications, such as monitoring heart rate or blood pressure, can be less accurate for darker skin tones. Studies are underway to find better solutions.

Minor flaws in algorithms can also produce false alarms and frighten patients enough to prevent widespread adoption. For example, Apple's new car crash detection feature, available on both the latest iPhone and Apple Watch, was triggered when people rode roller coasters, and the devices automatically dialed 911.

“We’re not there yet,” Yang said. “That’s the bottom line.”